In its 2023 global survey on the current state of AI, McKinsey declared 2023 as the breakthrough year of generative AI (genAI). For many people, the launch of ChatGPT in November 2022 marked the start of an impressive journey where generative AI is transforming lives both on a professional and personal level.

Harnessing RAG: Empowering LLMs with Retrieval-Augmented Generation

However, generative AI has been around for many more years than that. One of the disrupting techniques in the field of generative AI that has caught significant attention from a business perspective is Retrieval Augmented Generation (RAG). RAG is a technique that augments the capacity of Large Language Models (LLMs) by combining elements of both retrieval-based models and generative models. In essence, with RAG, you can connect proprietary and external data sources, allowing to retrieve specific information that can be used as additional context for LLM’s to generate a desired output.

On a first level, this additional information retrieval allows for what is referred to as ‘ChatGPT’ing with your own data’. Essentially, with a RAG system, businesses can ask for relevant, company-specific information similar to how we use ChatGPT. On a higher level, this additional information can be used to generate a specific desired response or output, allowing businesses to generate text based on, or containing, company-specific information.

Core Components

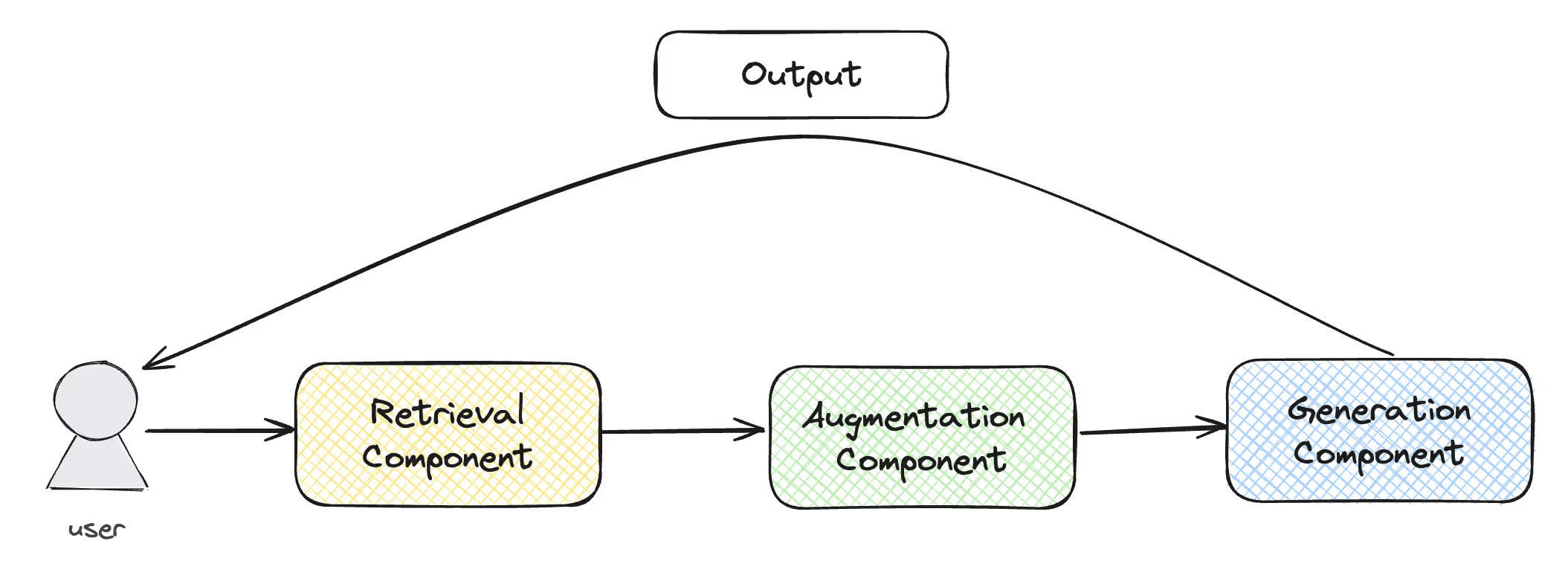

In its simplest form, a RAG system can be seen as an entity, consisting of three separate building blocks, as can be seen in the figure below. The process starts with a user that sends a request for information (a query) to the RAG system, triggering a response. The first building block or component is the retrieval component. The main purpose of the the retrieval component is to interpret the user query and to retrieve relevant information from specific data sources that likely match the intent of the query. After finding the relevant information, the additional context is sent to the second building block: the augmentation component. This component is responsible for augmenting (basically enriching) the initial query with the freshly retrieved information. Subsequently, the enriched query is sent to the generation component, the third and last building block. The generation component is basically an LLM that takes the augmented query as an input and produces, if all goes well, the desired output, which is based on the additional context provided by the retrieval component.

How RAG Works

But what is happening behind the scenes in each of these building blocks? Let’s have a closer look to really understand how a RAG system works by looking at each of these building blocks separately. Before diving in, let’s first take a step back and look at how to set the right foundations before building a RAG system.

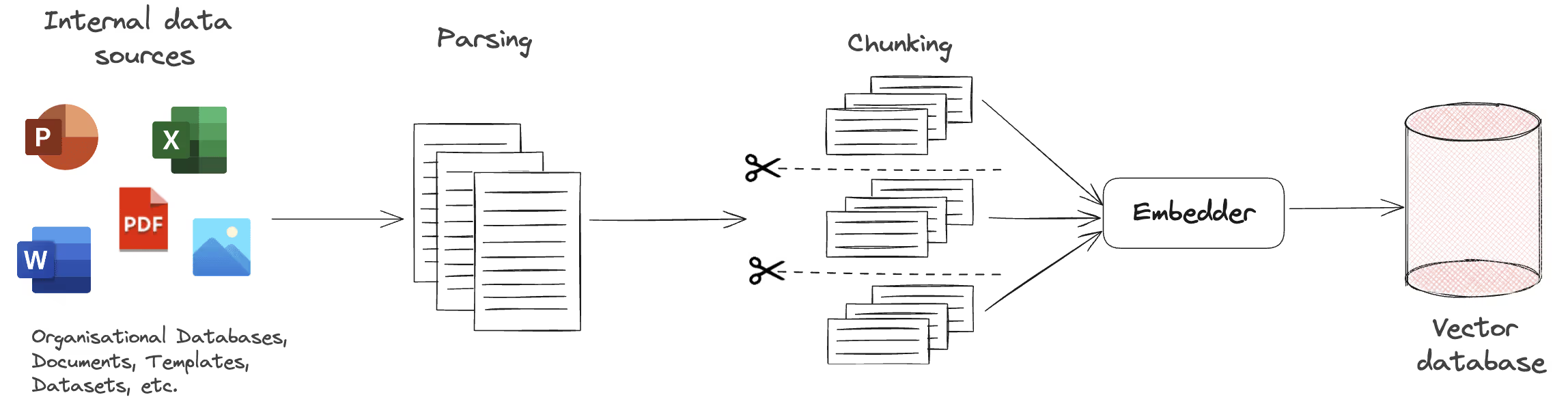

Ground Zero: Setting up the Vector Database

The vector database is the central database of a RAG system and contains preprocessed information from internal data sources. These sources van be any type of information that can be found within the company, like organisational databases, specific documents, templates, datasets, etc. The data can be stored in local files, cloud storage, data warehouses, etc. After locating, the data is parsed, which entails both conventional textual data preprocessing and converting non-textual data sources into text. Subsequently, the textual data is chunked, or split, into smaller, more manageable pieces. Having smaller pieces of textual data allow for more efficient information retrieval later on. After chunking, the chunks are converted to high-dimensional numerical vectors (embeddings) via an embeddings model. This numerical representation captures the meaning of the text chunk in a numerical way and is needed for the retrieval algorithm in order to do its job. These embeddings are then stored in a vector database, in a structured way that allows for efficient retrieval. This is called indexing, which is in essence a complex data structure that organizes and stores the embeddings in a specific way, depending on the indexing technique used. These techniques use specific vector properties, like proximity, dimensionality, or distribution in the vector space. The indexing structure ensures that information retrieval can be done in an efficient way.

Particularly important in setting up the vector database is ensuring that correct and sufficient metadata is available for every embedding as this metadata helps retrieving relevant information as will be seen later on.

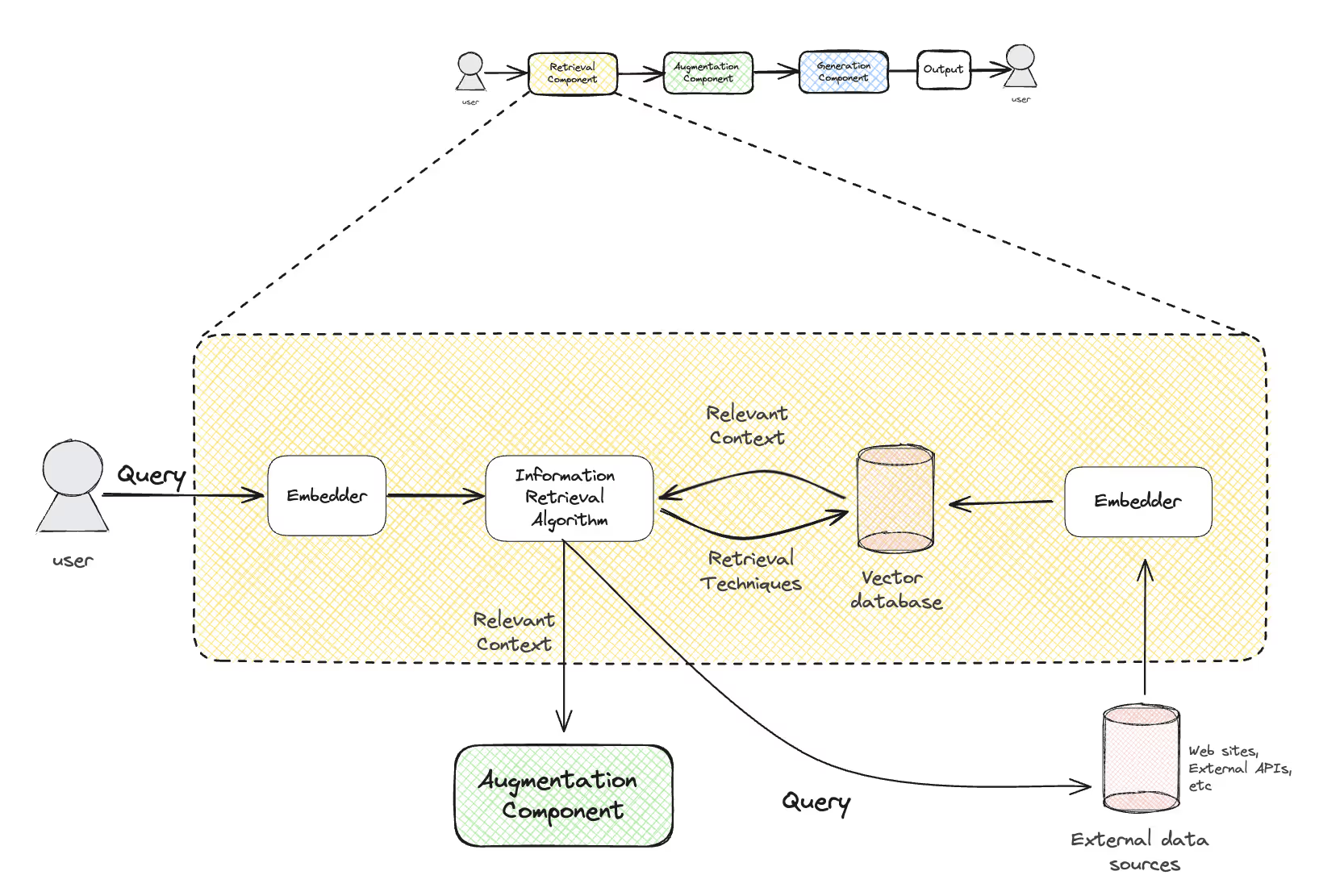

Retrieval component

As stated earlier, the main purpose of the retrieval component is to search and find the most relevant information, based on a specific user query. Although this idea seems trivial in our heads, it is a nifty and complex process to retrieve this information. As can be seen from the diagram below, it starts with a user query, triggering the retrieval component. The user query is converted into a numerical vector with the same embeddings model as before. Through retrieval techniques the information retrieval algorithm scans the vector database for information relevant to the user query. For efficiency reasons, the algorithm doesn’t scan the whole vector database, but rather uses the indexing structure that was explained in the previous paragraph. The index allows the system to quickly narrow down potential matches hence avoiding a full scan of the entire vector database, which is particularly useful in case of large vector databases.

But what does it mean to search for relevant information? Once the best potential matches are found via the indexing structure, a similarity metric is calculated between the potential match and the initial query. This metric measures the degree of relatedness via a specific formula. Popular examples of similarity metrics are cosine similarity and Euclidean distance. Subsequently, the potential matches are ranked based on their similarity scores and the top k, which can be specified, are selected as the results of the search for relevant information. The results can then be refined by comparing the metadata of the top k results with the initial query. If the initial query appears to contain specific criteria (like for example a date, an author, or a tag), the query can be matched against the metadata of the top k results and the system can filter or rank the results based on these criteria, which adds an additional relevance check, allowing for optimal retrieval.

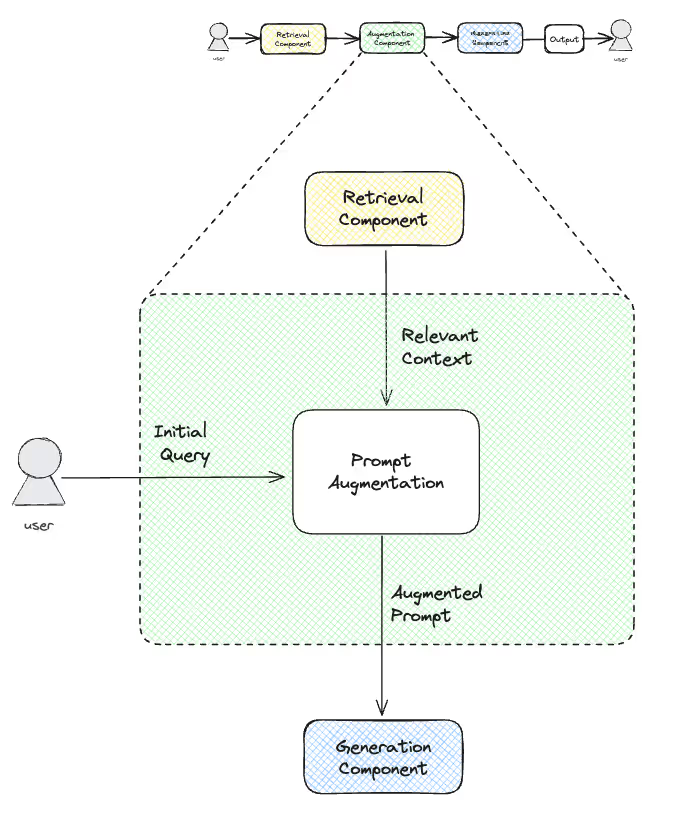

Augmentation Component

The augmentation component receives the relevant context from the retrieval component. It also takes the original query as an input. The purpose of this component is to act as a bridge between the retrieval and generation component by augmenting or enriching the original query with the additional information that was retrieved in the previous step. In essence, the augmentation component integrates the relevant context within the original query, combining and consolidating multiple chunks if needed.

From a technical perspective, augmenting the original prompt with additional information entails combining the embeddings through prompt engineering, which can be done in multiple ways. The easiest way to do so is by just appending or concatenating the retrieved embeddings. Other possibilities are, among others, interpolation, averaging, or taking a weighted sum of the embeddings. Before passing on the augmented query to the generation component, the augmentation component makes sure that the query is in the right format. To do so, additional steps like dimensionality reduction or ensuring semantic coherence through attention mechanisms might have to be taken. Subsequently, the whole augmented query gets transported to the third and last component, the generation component.

Generation Component

The generation component receives the augmented prompt from the augmentation component. In essence, the generation component is an LLM. This LLM can be a publicly available LLM, like ChatGPT, or a locally deployed open source LLM such as Meta’s Llama 2. Whereas publicly available LLMs are usually accessed through an API in a pay-as-you-go system, open source models can be downloaded for free and deployed locally on your own servers, which does imply that there will be costs related to computational resources.

Fundamentally, an LLM is trained to predict the next word in a sequence of words. To do so, LLMs are trained on a vast amount of data, mostly available on the Internet. Besides that, their architecture, extensive training, and attention mechanisms allow the LLM to understand the context and language used in the query, rather than probabilistically predicting the next word, which make these models extremely powerful. So after receiving the enriched query, the generation component addresses the LLM and the query is processes in order to understand its structure, content, and context. Subsequently, a coherent and contextually relevant output is generated, one word at a time, aligning with the information provided in the prompt. This output is than converted back to plain text, et voilà, the user receives an answer to his original query that is factually accurate, contextually relevant, and based on additional information, retrieved from a specific data source that is not common knowledge for LLMs.

The Importance of Data Quality

The performance of RAG systems is heavily depending on data quality, after all: garbage in, garbage out. Data quality is a crucial prerequisite for efficient and effective information retrieval, which is the starting point of RAG systems. High-quality data, structured and indexed efficiently in the vector database, increases the speed and accuracy of the information retrieval process. Moreover, it also reduces the computational complexity, as the well-structured vector database allows to quickly find potential relevant information. Besides retrieval efficiency, best-of-class data quality significantly improves the effectiveness of information retrieval. When setting up a RAG system, you want your system to retrieve only relevant and accurate data. High-quality (meta)data ensures that the information retrieved by the RAG system is accurate and relevant to the initial query. Irrelevant, inaccurate, or misleading information, as a result of poor data quality, can be detrimental for the quality of the generated output.

Several data quality tasks can lead to improved data quality to ensure that your data is in perfect condition to feed to your RAG system. Luckily, AI is here to help us automate these tasks (classification, tagging, matching, enriching, and many more) that are too often performed manually, typically prone to errors.

Conclusion

RAG systems have become increasingly popular over the last year, with new RAG-based applications launching almost on a daily basis, ranging from very generic RAG applications like Microsoft Copilot to custom-build applications, tailored at the specific needs of a sector, workflow, or company. As a small recap, RAG systems essentially combine the power of information retrieval algorithms and the text generation capabilities of LLMs, empowering businesses to quickly retrieve specific information and use this information in automated text generation processes. This significantly reduces manual work and boosts employee productivity. However, the importance of data quality cannot be neglected when it comes to the performance of your RAG system.

.avif)