In the first part of this series, we talked about the importance of validating the output generated by RAG systems. Output validation can happen on three levels: quantitative validation, qualitative validation, and a combination of both. In the first part, we focused on quantitative output validation for RAG systems using the RAGAs framework. The RAGAs frameworks includes metrics to validate three aspects of RAG output: information retrieval, output generation, and the end-to-end RAG pipeline. For each of the three aspects, RAGAs provides metrics to quantitatively assess the quality of the output, where quality can take multiple dimensions.

Decoding RAGAs: Understanding the Four Inputs of the Framework

Broadly speaking, the RAGAs framework takes four different inputs:

- Question: the query provided by the user of the RAG system

- Context: the information retrieved by the RAG system

- Answer: the output provided by the RAG system, based on the retrieved context

- Ground Truth: the true and ideal answer to the question

The first three inputs seem trivial to collect. However, what about the ground truth? The importance is clear: eventually you would want to compare the generated output from the RAG system with a reference answer that is deemed correct, given a specific question. Unfortunately, these ground truth datasets are not always readily available somewhere on the internet or within your company, at least not for specific use cases. In this blog post, we will deep dive in the different ways of constructing a ground truth dataset.

Note that the ground truth dataset is a combination of the above inputs. The ground truth dataset serves as a test set for output validation. In essence, it boils down to:

- Formulating a series of questions

- Selecting a specific context needed for the answer

- Answering the questions through one of the methods we’ll describe in a minute (which represents the ground truth)

- Feed the questions to the RAG system

- RAG system retrieves the relevant context based on the question

- RAG system formulates an answer to the questions.

The ground truth dataset contains the generated questions, ground truth answers, the context retrieved by the RAG system, and the answers produced by the RAG system. Combining all of these leads to a ground truth dataset, ready to be used for RAG output validation.

Different Ways of Building a Ground Truth Dataset

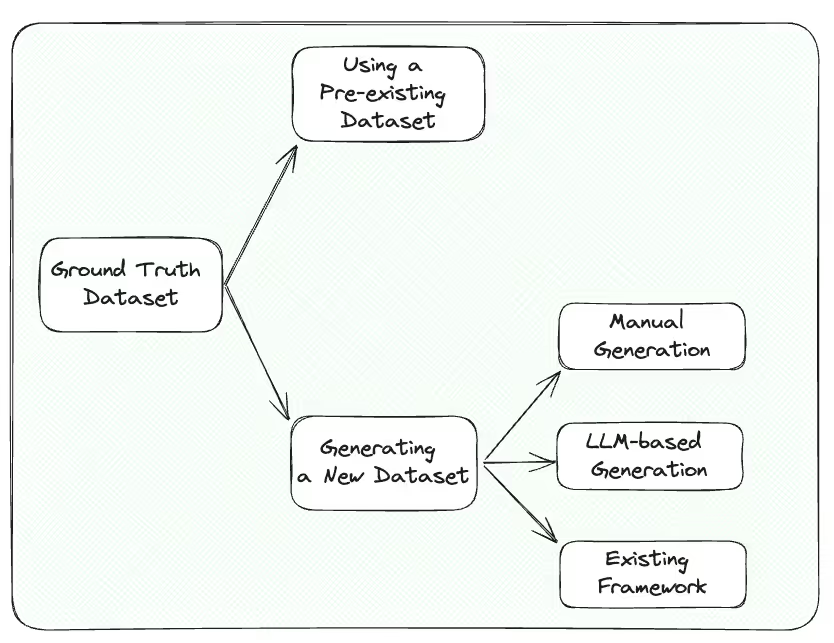

Broadly speaking, there are two ways to come up with a ground truth dataset for RAG output validation. On the one hand, you could use a pre-existing ground truth dataset, to be found for example on the Internet. On the other hand, you could opt to generate a new ground truth dataset. Within the latter, several options are available:

- Manually generating a ground truth dataset, using your domain knowledge

- Synthetically generating a ground truth dataset by asking an LLM

- Using common frameworks to generate a synthetic ground truth dataset

The figure below shows the different possibilities. Obviously, each method has its pros and cons in terms of effort, convenience, and truthfulness. Let’s have a look at each of them in turn.

Ground Truth Dataset

Method 1: Using a Pre-existing Ground Truth Dataset

Probably the easiest way of establishing a ground truth dataset is to use a pre-existing one. The internet is full of available datasets, like WikiEval, SQuAD, or HotpotQA. These datasets contain sets of questions and ground truth answers, ranging from several dozen to tens of thousands of examples that can be used to validate RAG output. The body of knowledge where the examples are generated from can be publicly available knowledge bases like Wikipedia or manually collected and written by researchers or businesses.

Using pre-existing ground truth datasets seems like a trivial choice. After all, those datasets are easily accessible, very cost and time efficient, and allow for easy benchmarking. However, there are some important drawbacks. The most important disadvantage is a lack of domain-specificity. Many of the available datasets are general-purpose datasets and they might not cover specific domains or use cases in sufficient depth. This specific domain is often the main reason why businesses want to build a custom RAG system instead of going for an off-the-shelf generic copilot application. One of the core challenges in RAG output validation is to effectively measure the system’s performance in its intended environment, which has important implications for choosing a ground truth dataset. In case of specific business applications, using a generic pre-existing ground truth dataset that is markedly different from your company-specific situation is probably not the best option. Although these datasets might prove to be a good starting point, solely relying on pre-existing datasets might not capture the effectiveness of your RAG system.

Method 2: Manual Ground Truth Dataset Generation

Another possibility for establishing a ground truth dataset is to manually construct one. Using domain expertise it might seem like a good choice to manually perform the following tasks:

- Come up with specific questions

- Manually select the context needed to answer the questions

- Craft an ideal answer to the questions

The big advantage of manually constructing a ground truth dataset is obviously the fact that you can tailor the questions to very specific situations and that you, as a human, are fully convinced that the answers to the questions are indeed the correct answers (something that you cannot necessarily say if you go for a pre-existing dataset). The obvious downside of manual ground truth dataset generation is the manual effort. Generating a dataset that is sufficiently large and accurate demands significant resource investment, limiting the scalability. Moreover, bias based on the creator’s perspective might be introduced in the dataset. Manual dataset generation seems like a valuable option for very specific domains and if the dataset to be constructed should not be too large.

Method 3: LLM-based Ground Truth Dataset Generation

Another interesting way to come up with a ground truth dataset is to seek help from an LLM. This method leverages the LLMs reasoning and generation capabilities. By feeding your knowledge base to an LLM and asking it to generate sample questions and accompanying answers, a ground truth dataset can be constructed relatively easy. The most important advantages are scalability and efficiency. LLMs can easily generate large volumes of questions and answers quickly, allowing to create substantial datasets in a short time period. By tuning the prompt, you can ask for a diverse range of questions and tailor the types of questions and answers such that they are closely aligned to your specific use case. Moreover, LLM-generated ground truth dataset can offer a consistent format and structure, which tends to be beneficial for systematic training and evaluation of your RAG system.

However, there are some important drawbacks. First of all, there are concerns regarding quality and accuracy of the generated questions and answers. There isn’t really a way to validate the quality of the generated dataset besides manually reviewing them, which might take some time, although it’s definitely faster than manually constructing the dataset. Besides that, there is a limited depth of understanding. LLMs are great tools and more than proficient in generating content based on surface-level patterns, but they can not match humans (yet) when it comes to understanding deeply technical, nuanced or very domain-specific matters. This might limit the relevance of the generated dataset. Next to that, LLMs are by default not good at creating a diverse set of samples as they tend to follow common paths. Lastly, LLMs do present context window limitations, meaning that you will probably not be able to feed your whole knowledge base to the LLM at once. You might have to split up the knowledge base in smaller, more manageable pieces in order for the LLM to perform well in generating questions and answers. There is some kind of trade-off between chunking the knowledge base into bigger pieces and the relevancy of the questions and answers generated, similar to how chunking the knowledge base before embedding affects RAG performance, although at a different scale. If the content you feed to the LLM is too large, you might risk to let the LLM generate questions and answers that are too superficial. On the other hand, making the chunks smaller allows for more in-depth questions and answers, but this significantly increases the workload.

The process described above entails manually uploading documents in an LLM web interface and manually crafting prompts to generate questions and answers based on the uploaded documents. However, this LLM-based ground truth dataset generation can also by automated by writing a script that basically performs the following steps:

- Prepare RAG knowledge base and embed in a vector database

- Pick a (random) sample and use it as context

- Generate a specific number of questions for the random sample

- Repeat step 1 to 3 for a specific number of (random) samples

In this way, the need for manual effort is significantly reduced, increasing the efficiency of ground truth dataset generation.

Method 4: Synthetical Ground Truth Dataset Generation with Existing Frameworks

The fourth and last possibility for establishing a ground truth dataset is to use existing frameworks to assist you in automatically generating the ground truth dataset. Frameworks like RAGAs provide tooling for this matter. RAGAs takes a specific approach for generating the ground truth dataset, building upon the shortcomings of LLM-based generation, as discussed above. RAGAs implements an evolutionary generation paradigm. Essentially, this implies that RAGAs uses a methodology for systematically creating questions and ground truth answers that are based on iteratively refining and developing questions based on the context provided. This approach mimics evolutionary processes to systematically generate diverse and challenging question, hence the name evolutionary generation paradigm.

More specifically, within the synthetic dataset generation component of the RAGAs framework, an LLM is asked to generate a set of simple questions that is iteratively refined into more complex questions. The following methods are used:

- Reasoning: involves rewriting the questions in a way that increases the need for reasoning to effectively answer the questions

- Conditioning: entails modifying the questions to introduce a conditional element, adding complexity to the questions

- Multi-context: implies rephrasing the question in such a what that it needs information from multiple related context chunks to compile an answer

Moreover, RAGAs does not solely transform the simple questions into more complex ones following the methods above. The framework also provides the capabilities to reshape the questions into a conversational flow, through the evolution process. Hence, the final output of the synthetic ground truth dataset generation is a set of questions and answers that resemble a Q&A chat-like conversation. RAGAs also allows to choose a custom distribution of question types, for example 50% reasoning questions, 20% conditioning questions, and 30% multi-context questions.

The big advantage of leveraging RAGAs for synthetic ground truth dataset generation is the fact that more complex questions allow for a more thorough evaluation of the RAG system in terms of reasoning capabilities, contextual understanding, and handling complex queries. The downsides of this method involve the potentially high complexity to set up and computational resources required for implementing the evolutionary algorithms.

Side-be-side Comparison

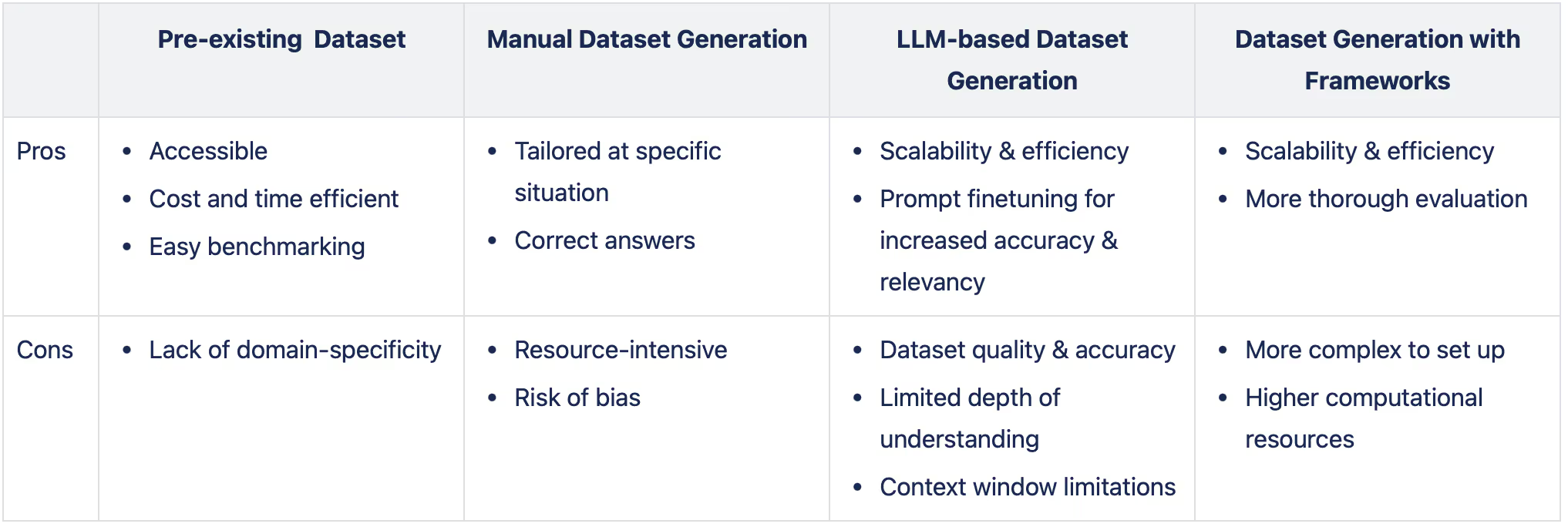

The table below summarises the most important advantages and drawbacks of the different methods for generating a ground truth dataset. Although every method has its own specific pros and cons, we do favour generating ground truth datasets by using the tooling available in existing frameworks. However, the choice for which method to pick does depend on your specific situation or use case, available resources, and the extent to which you are willing to rely on output produced by LLMs.

Conclusion

In brief, there are different ways of establishing a ground truth dataset for RAG output validation, all with their specific pros and cons. There is no one-size-fits-all solution and picking one method (or even combining different methods) requires careful consideration. After generating the ground truth dataset, RAG output can be validated in a quantitative way, as we discussed in our previous blog post. In the next part of this series, we’ll dive into human feedback for qualitative output validation and LLM-based feedback as a combination of both quantitative and qualitative output validation. Stay tuned!

.avif)